Apple Intelligence is Apple’s take on AI, and it looks to fundamentally change the way we interact with technology, blending advanced machine learning and AI capabilities into everyday devices.

Promising more conversational prose from Siri, automated proofreading and text summarization across apps, and lightning-fast image generation, Apple’s AI ecosystem is designed to enhance user experiences and streamline operations across its product lineup. Here’s everything you need to know about Apple’s transformational new AI.

Apple Intelligence release date and compatibility

Apple Intelligence was originally slated for a formal release in September, coinciding with the rollout of iOS 18, iPadOS 18, and macOS Sequoia. However, as Bloomberg's Mark Gurman reported, Apple subsequently decided to slightly delay the release of Apple Intelligence. It is currently available to developers, but according to Gurman, it looks unlikely that Apple Intelligence will be released publicly before the iOS 18.1 rollout scheduled for October.

The company has specified that, at least initially, the AI features will be available on the iPhone 15 Pro and 15 Pro Max, as well as on iPads and Macs with M1 or newer chips (and presumably on the upcoming iPhone 16 handsets as well). What's more, the features will only be available at launch when the device language is set to English.

Why the cutoff? Well, Apple has insisted that the AI workloads are too intensive for older hardware, since they rely on the more advanced neural engines, GPUs, and CPUs of these newer chips.

Users with an iPhone 15 Pro or iPhone 15 Pro Max who are part of Apple's Developer Program gained access to an early version of Apple Intelligence in July with the release of the iOS 18.1 beta.

New AI features

No matter what device you’re using Apple Intelligence with, the AI will focus primarily on three functions: writing assistance, image creation and editing, and enhancing Siri’s cognitive capabilities.

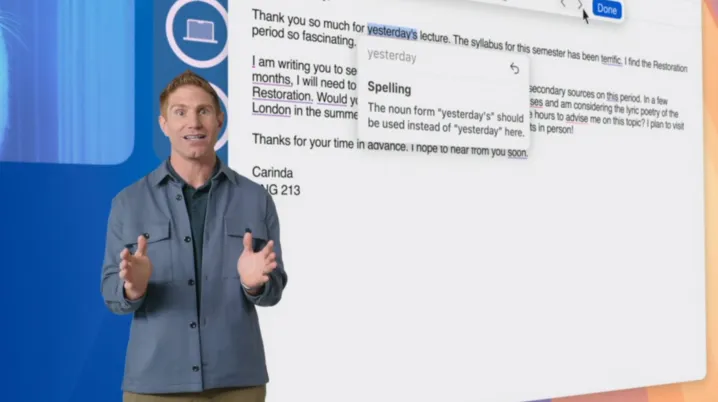

Writing Tools

In the Mail app, for example, Apple Intelligence will show users short summaries of the messages in their inbox rather than the first couple of lines of each email. Smart Reply will suggest responses based on the contents of a message and help ensure that the reply addresses every question posed in the original email. The app will even move more timely and pertinent correspondence to the top of the inbox via Priority Messages.

The Notes app will see significant improvements as well. With Apple Intelligence, Notes will offer new audio transcription and summarization features, as well as an integrated calculator, dubbed Math Notes, that solves equations typed into the body of the note.

Image Playground

Image creation and editing will largely be handled by the new Image Playground app, in which users will be able to generate pictures within seconds in one of three artistic styles: Animation, Illustration, and Sketch. Image Playground will exist as a standalone app, with many of its features also integrated into other Apple apps such as Messages.

Apple Intelligence is also coming to your camera roll. The Memories function in the Photos app was already capable of automatically identifying the most significant people, places, and pets in a user’s life, then curating that set of images into a coherent collection set to music. With Apple Intelligence, Memories is getting even better.

The AI will select the photos and videos that best match the user's prompt ("best friends road trip to LA 2024," for example). It will then generate a storyline, including chapters based on themes the AI finds in the selected images, and assemble the whole thing into a short film. Once Apple Intelligence clears the public beta, Photos users will also gain access to improved Search functions and Clean Up, a tool akin to Google's Magic Eraser for removing distracting objects from an image.

Siri

Perhaps the biggest beneficiary of Apple Intelligence’s new capabilities will be Siri. Apple’s long-suffering digital assistant will become more deeply integrated into the operating system, with more conversational speech and improved natural language processing.

What's more, Siri's memory will be more persistent, allowing the assistant to remember details from previous conversations, and users will be able to switch more seamlessly between spoken and typed prompts.

Apple Intelligence privacy

Most of Apple Intelligence's routine operations will be handled on-device, using the company's A17 Pro and M-series chips, said Craig Federighi, Apple's senior vice president of Software Engineering, during WWDC 2024. "It's aware of your personal data, without collecting your personal data," he added.

"When you make a request, Apple Intelligence analyzes whether it can be processed on-device," Federighi continued. "If it needs greater computational capacity, it can draw on Private Cloud Compute and send only the data that's relevant to your task to be processed on Apple silicon servers." Because only task-relevant data leaves the device, this approach should substantially reduce the amount of private user data that could be intercepted or otherwise exposed in transit between the device and Private Cloud Compute (PCC).

“Your data is never stored or made accessible to Apple,” he explained. “It’s used exclusively to fulfill your request and, just like your iPhone, independent experts can inspect the code that runs on these servers to verify this privacy promise.”

Apple Intelligence’s ChatGPT partnership

Apple Intelligence won't be the only cutting-edge generative AI taking up residence in your Apple devices this fall. During WWDC, Apple and OpenAI executives announced a partnership that will see ChatGPT functionality (powered by GPT-4o), including image and text generation, integrated into Siri and Writing Tools. Much like Private Cloud Compute, ChatGPT will step in when Siri's on-device capabilities aren't sufficient for a user's query; the difference is that these requests are sent to OpenAI's cloud servers rather than to PCC.

Apple Intelligence trained on Google’s Tensor Processing Units

A research paper from Apple, published in July, reveals that the company opted to train key components of the models behind Apple Intelligence using Google's Tensor Processing Units (TPUs) instead of Nvidia's highly sought-after GPU-based systems. According to the research team, using TPUs gave them the computational power needed to train their large language models, and allowed them to do so more energy-efficiently than they could have with a standalone system.